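Selenium Crawler Project (3): Fetching Weibo Social Information

Introduction

Things seem to have piled up again lately, though I could not tell you what exactly has kept me busy. I had planned to finish this course project over the weekend, but yesterday I was pulled in to photograph the school sports meet for the whole day. I started writing after getting back in the evening and finished essentially all of the information-gathering part, so only the visualization is left. This post covers the Weibo social information; the next one will cover fetching the post feed.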

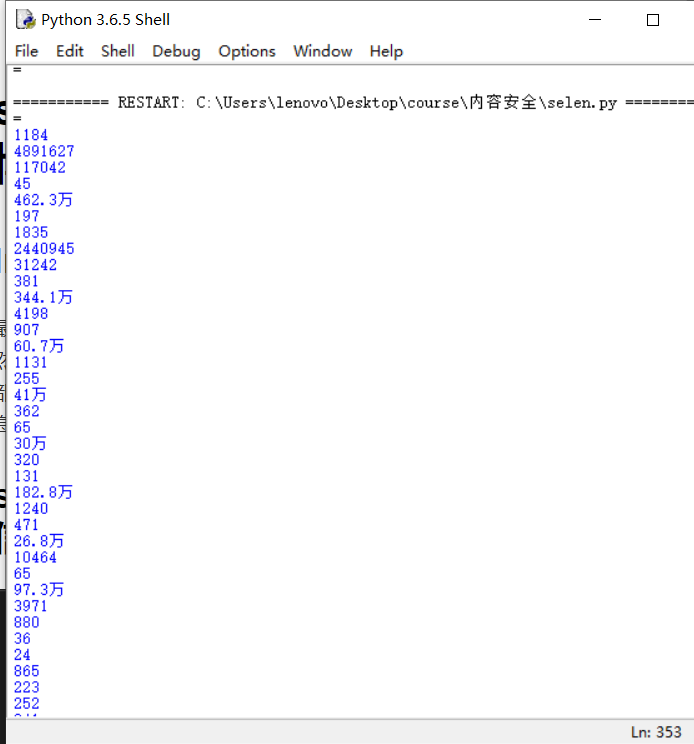

step1 Fetch information for the first ten accounts in the following list and the follower list

Code:

    from selenium import webdriver
    import time
    import sys

    # Written for Selenium 3: webdriver.Chrome(path) and find_element_by_*.
    # Replace characters outside the BMP (emoji etc.) with U+FFFD before saving.
    non_bmp_map = dict.fromkeys(range(0x10000, sys.maxunicode + 1), 0xfffd)

    # Table row holding the following / follower / post counts on a profile page.
    COUNTS = '//*[@id="Pl_Core_T8CustomTriColumn__3"]/div/div/div/table/tbody/tr'
    # One entry of the following / follower list (format with the 1-based index).
    ENTRY = '//*[@id="Pl_Official_HisRelation__56"]/div/div/div/div[2]/div[1]/ul/li[{}]/dl/dd[1]/div[1]/a[1]'


    def switch_to_new_window(driver):
        # Assumption: the old tab is closed before switching, so that only
        # the newly opened tab stays open.
        for handle in driver.window_handles:
            if handle != driver.current_window_handle:
                driver.close()
                driver.switch_to.window(handle)
                break


    def save_page(driver, filename):
        # Dump the current page source to a UTF-8 text file.
        source = driver.page_source
        with open(filename, "w", encoding='utf-8') as f:
            f.write(str(source).translate(non_bmp_map))


    def print_counts(driver, suffix):
        # Print following count, follower count and post count, in that order.
        for col in (1, 2, 3):
            print(driver.find_element_by_xpath(f'{COUNTS}/td[{col}]{suffix}').text)


    def visit_opened_tabs(driver, prefix, try_plain_layout):
        # Visit every tab opened from the list, newest handle last in the list.
        tabs = driver.window_handles[1:]
        for i in range(len(tabs)):
            time.sleep(1)
            driver.switch_to.window(tabs[len(tabs) - 1 - i])
            try:
                time.sleep(3)
                if try_plain_layout:
                    try:
                        print_counts(driver, '/strong')
                    except Exception:
                        print_counts(driver, '/a/strong')
                else:
                    print_counts(driver, '/a/strong')
                driver.find_element_by_xpath('//*[@id="Pl_Core_UserInfo__6"]/div[2]/div[1]/div/a').click()
                time.sleep(3)
                save_page(driver, f"{prefix}_{i}.txt")
                time.sleep(1)
            except Exception:
                print("no info")
            driver.close()  # assumption: close the tab so it is not visited twice
            time.sleep(2)
        driver.switch_to.window(driver.window_handles[0])


    if __name__ == '__main__':
        driver = webdriver.Chrome('C:\\Users\\lenovo\\Anaconda3\\Lib\\site-packages\\chromedriver.exe')
        driver.get('https://www.baidu.com')
        try:
            # Search Baidu for "微博" and open the result with id 2.
            driver.find_element_by_xpath('//*[@id="kw"]').click()
            driver.find_element_by_xpath('//*[@id="kw"]').send_keys('微博')
            time.sleep(3)
            driver.find_element_by_xpath('//*[@id="su"]').click()
            time.sleep(2)
            driver.find_element_by_xpath('//*[@id="2"]/div/div[1]/h3/a').click()
            time.sleep(10)
            switch_to_new_window(driver)

            # Scroll down and follow the links that lead to the login page.
            driver.execute_script("window.scrollBy(0,3000)")
            time.sleep(5)
            driver.find_element_by_xpath('//*[@id="app"]/div[1]/div[1]/div[2]/div[1]/div/div/div[3]/div[1]/div/a[1]').click()
            time.sleep(5)
            driver.find_element_by_xpath('//*[@id="app"]/div[4]/div[1]/div/div[2]/div/div/div[5]/a[1]').click()
            switch_to_new_window(driver)
            time.sleep(10)

            # Log in. The locator types (id / class name) are assumptions.
            driver.find_element_by_id("loginname").click()
            driver.find_element_by_id("loginname").send_keys("your_account")
            driver.find_element_by_xpath('//*[@id="pl_login_form"]/div/div[3]/div[2]/div/span').click()
            driver.find_element_by_xpath('//*[@id="pl_login_form"]/div/div[3]/div[2]/div/input').send_keys('your_password')
            time.sleep(5)
            driver.find_element_by_class_name('W_btn_a').click()
            time.sleep(5)
            driver.find_element_by_xpath('//*[@id="dmCheck"]').click()
            time.sleep(1)
            driver.find_element_by_id('send_dm_btn').click()
            time.sleep(20)

            # Search Weibo for the user "等风" and open the profile.
            driver.find_element_by_xpath('//*[@id="plc_top"]/div/div/div[2]/input').click()
            time.sleep(20)
            driver.find_element_by_xpath('//*[@id="plc_top"]/div/div/div[2]/input').send_keys("等风")
            time.sleep(10)
            driver.find_element_by_xpath('//*[@id="plc_top"]/div/div/div[2]/a').click()
            time.sleep(2)
            driver.find_element_by_xpath('/html/body/div[1]/div[2]/ul/li[2]/a').click()
            driver.find_element_by_xpath('//*[@id="pl_user_feedList"]/div[2]/div[2]/div/a[1]').click()
            time.sleep(2)
            switch_to_new_window(driver)
            time.sleep(10)
            driver.find_element_by_xpath('//*[@id="Pl_Core_UserInfo__6"]/div[2]/div[1]/div/a/span').click()
            time.sleep(5)
            switch_to_new_window(driver)
            time.sleep(10)
            save_page(driver, "information1.txt")

            # Following list: open the first ten entries, then visit each tab.
            driver.find_element_by_xpath('//*[@id="Pl_Core_T8CustomTriColumn__50"]/div/div/div/table/tbody/tr/td[1]/a/strong').click()
            time.sleep(3)
            for i in range(1, 11):
                driver.find_element_by_xpath(ENTRY.format(i)).click()
                time.sleep(1)
            visit_opened_tabs(driver, "关注", try_plain_layout=True)

            # Follower list: same procedure.
            driver.find_element_by_xpath('//*[@id="Pl_Official_HisRelationNav__55"]/div/div[2]/div[1]/div/div/div/div/ul/li[2]/a').click()
            time.sleep(5)
            for i in range(1, 11):
                driver.find_element_by_xpath(ENTRY.format(i)).click()
                time.sleep(1)
            visit_opened_tabs(driver, "粉丝", try_plain_layout=False)
        finally:
            time.sleep(30)
            driver.quit()  # assumption: release the browser at the end
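
The `non_bmp_map` table in the script replaces every character outside the Basic Multilingual Plane (emoji, for example) with U+FFFD before the page source is written to disk. Below is a minimal standalone sketch of that idea; the helper name `strip_non_bmp` is hypothetical and does not appear in the original script.

```python
import sys

# Map every code point above U+FFFF to U+FFFD (the replacement character).
non_bmp_map = dict.fromkeys(range(0x10000, sys.maxunicode + 1), 0xFFFD)


def strip_non_bmp(text):
    """Return text with all non-BMP characters replaced by U+FFFD."""
    return str(text).translate(non_bmp_map)


if __name__ == "__main__":
    # The emoji is replaced; the BMP characters (including Chinese) are kept.
    print(strip_non_bmp("微博 \U0001F600 post"))
```

Characters inside the BMP, which covers ordinary Chinese text, pass through unchanged, so the saved pages stay readable.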

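Explanation

There is a lot to watch out for in this step. First, window handles: collecting the data means entering the home pages of many different users, so the driver has to be switched to the correct window each time. Second, account types: official accounts and ordinary users have differently structured home pages, and both layouts need to be handled. Finally, the target may follow, or be followed by, fewer than 10 accounts. To keep things simple, all of these cases are handled with try-except statements rather than explicit checks.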
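
The window switching and the two-layout fallback described above can be sketched as two small helpers. This is an illustration under assumptions, not the original script: `switch_to_new_window` and `first_text` are hypothetical names, and `find` stands for any callable that looks up an element by XPath, for example `lambda xp: driver.find_element("xpath", xp)` with a Selenium driver.

```python
def switch_to_new_window(driver, known_handles):
    """Switch to the first window handle that is not in known_handles.

    Uses only driver.window_handles and driver.switch_to.window, both part
    of the Selenium WebDriver API. Returns the selected handle, or None
    when no new window has been opened.
    """
    for handle in driver.window_handles:
        if handle not in known_handles:
            driver.switch_to.window(handle)
            return handle
    return None


def first_text(find, xpaths):
    """Return the text of the first XPath that resolves, or None.

    find(xpath) must return an object with a .text attribute, or raise
    when nothing matches (Selenium raises NoSuchElementException).
    """
    for xpath in xpaths:
        try:
            return find(xpath).text
        except Exception:  # broad on purpose: any failed lookup means "try the next layout"
            continue
    return None
```

With the count table from the script, `first_text(find, [row + '/td[1]/strong', row + '/td[1]/a/strong'])` would try both layout variants in order, where `row` is the XPath of the table row. Returning `None` instead of raising also gives a natural way to skip list entries that do not exist when an account has fewer than 10 followings or followers.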

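End

I am short on time, and the process above is mostly grunt work (the page jumps and inconsistent layouts really are annoying), so I will not explain it in more detail. It is enough to get the code running and to retrieve the following count, follower count, post count, and profile information for the accounts in the following list and the follower list.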